NPTEL Deep Learning for Computer Vision Week 6 Assignment Answers 2024

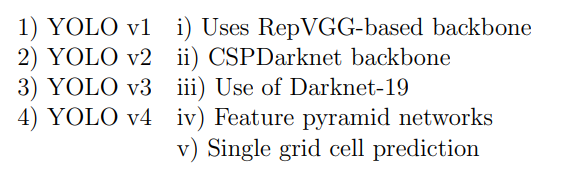

1. Match the following:

- 1→v, 2→i, 3→iv, 4→ii

- 1→iv, 2→i, 3→iii, 4→ii

- 1→v, 2→iii, 3→i, 4→ii

- 1→v, 2→iii, 3→iv, 4→ii

Answer :-

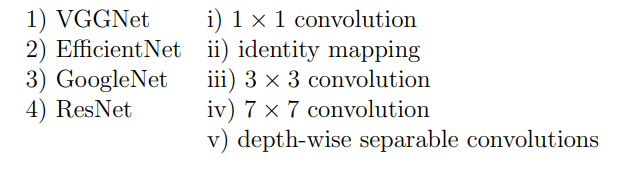

2. Match the following:

- 1→iv, 2→ii, 3→v, 4→i

- 1→iv, 2→iii, 3→v, 4→ii

- 1→iv, 2→v, 3→ii, 4→iii

- 1→iii, 2→v, 3→i, 4→ii

Answer :-

3. The ConvNeXt model is an evolution of convolutional neural networks (CNNs), designed to bridge the performance gap with vision transformers (ViTs). One of the key architectural innovations in ConvNeXt is the introduction of depthwise convolution blocks inspired by transformers. Which of the following statements regarding the architectural design of ConvNeXt is incorrect?

- ConvNeXt employs depthwise convolutions followed by pointwise convolutions, similar to the MobileNet architecture, but with additional layer normalization and GELU activation.

- ConvNeXt replaces the traditional ResNet bottleneck block with a modified block that removes the ReLU activation function in favor of more non-linear operations like Swish.

- The ConvNeXt model increases the size of the convolutional kernels to 7×7 to better capture long-range dependencies, mimicking the self-attention mechanism in transformers.

- ConvNeXt introduces LayerNorm after the depthwise convolution and before the pointwise convolution to stabilize the training dynamics.

Answer :-

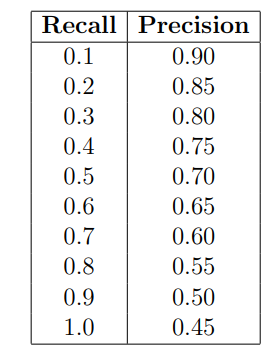

4. Consider an object detection system evaluated on a dataset consisting of 1000 images. The system makes 1500 predictions across these images, and for each image, there are annotated ground truth bounding boxes. The system’s precision-recall curve is calculated, and the precision at different recall levels for one of the classes is as follows:

Calculate the Average Precision (AP) for this class using the 11-point interpolation method, which averages the precision values at recall levels {0.0, 0.1, 0.2, …, 1.0}. The precision at recall 0.0 can be assumed to be 1.0.

Additionally, the system’s AP values for the other two classes are as follows:

- AP for class 2: 0.78

- AP for class 3: 0.72

Based on these AP values, what is the mean Average Precision (mAP) across all three classes?

- 0.70

- 0.69

- 0.73

- 0.76

Answer :-
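The precision table for this question sits in the image and is not reproduced in the text, so the class 1 AP cannot be computed here. The procedure itself can be sketched as below, assuming a made-up precision table (`hypothetical_precisions`) and helper names (`eleven_point_ap`, `mean_ap`) that are not part of the assignment. Only the 0.78 and 0.72 values come from the question.

```python
def eleven_point_ap(precision_at_recall):
    """11-point interpolated AP from a {recall_level: precision} mapping."""
    levels = [round(0.1 * i, 1) for i in range(11)]  # 0.0, 0.1, ..., 1.0
    total = 0.0
    for r in levels:
        # Interpolated precision at r: best precision at any recall >= r.
        total += max(
            (p for lvl, p in precision_at_recall.items() if lvl >= r),
            default=0.0,
        )
    return total / len(levels)


def mean_ap(ap_values):
    """mAP is the plain average of the per-class AP values."""
    return sum(ap_values) / len(ap_values)


# HYPOTHETICAL precision values, NOT the table from the question's image.
hypothetical_precisions = {
    0.0: 1.0, 0.1: 0.9, 0.2: 0.9, 0.3: 0.8, 0.4: 0.8, 0.5: 0.7,
    0.6: 0.6, 0.7: 0.5, 0.8: 0.4, 0.9: 0.3, 1.0: 0.2,
}
ap_class_1 = eleven_point_ap(hypothetical_precisions)

# 0.78 and 0.72 are the class 2 and class 3 APs given in the question.
print(f"AP (class 1, hypothetical table): {ap_class_1:.3f}")
print(f"mAP over three classes: {mean_ap([ap_class_1, 0.78, 0.72]):.3f}")
```

Substituting the real precision values from the question's table for `hypothetical_precisions` gives the AP and mAP the question asks for.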

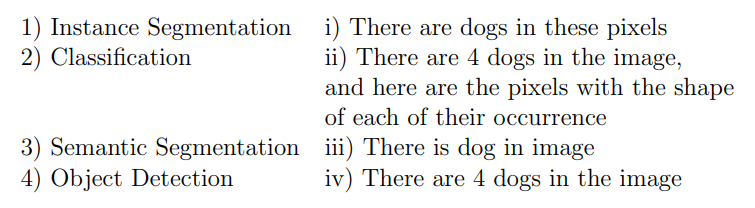

5. Match the following computer vision tasks to situations:

- 1→iv, 2→iii, 3→ii, 4→i

- 1→ii, 2→iii, 3→i, 4→iv

- 1→iv, 2→iii, 3→i, 4→ii

- 1→ii, 2→iv, 3→iii, 4→i

Answer :-

6. Which one of the following statements is false?

- EfficientNet employs a compound scaling method to improve model efficiency and accuracy across different scales.

- MobileNet utilizes depthwise separable convolutions to reduce the computational complexity of the network.

- DenseNet only connects each layer to the last layer in a feedforward fashion to address the vanishing gradient problem.

- SENet incorporates spatial squeeze-and-excitation blocks to enhance the representation power of the network by explicitly modeling channel-wise dependencies.

Answer :-

7. Which one of the following object detection networks uses an ROI pooling layer?

- Fast R-CNN

- R-CNN

- YOLO

- All of the above

Answer :-

8. Consider two 12×12 bounding boxes (one on the upper left and one on the lower right) in an image with an overlapping region of 8 × 8. The Intersection over Union (IoU) score between the two boxes is (choose the closest value):

- 10%

- 18%

- 28%

- 35%

Answer :-
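The arithmetic can be checked with a short sketch. The coordinates below are an assumption chosen only to reproduce the stated geometry (two 12×12 boxes offset by 4 pixels in each direction, giving an 8×8 overlap), and `box_iou` is an illustrative helper name.

```python
def box_iou(box_a, box_b):
    """IoU of two axis-aligned boxes given as (x_min, y_min, x_max, y_max)."""
    # Width and height of the overlap rectangle (zero if the boxes are disjoint).
    inter_w = max(0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    intersection = inter_w * inter_h

    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union


# Assumed placement: upper-left box at the origin, lower-right box shifted by 4.
upper_left = (0, 0, 12, 12)
lower_right = (4, 4, 16, 16)

iou = box_iou(upper_left, lower_right)
print(f"IoU = {iou:.4f}")  # 64 / (144 + 144 - 64) = 64 / 224 ≈ 0.2857
```

The intersection is 64 and the union is 224, so the IoU is about 28.6%, which is closest to the 28% option.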

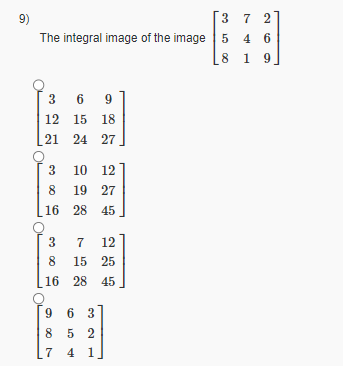

9.

Answer :-

10. Which of the following is true? Select all possible answers:

- The size of the effective receptive field reduces as we go deeper in a convolutional neural network.

- For transfer learning, if the source and target datasets are dissimilar and the target dataset size is small, it is better to use only the source task model’s general layers and append a new classifier to them instead of tuning the source task model’s specific layers.

- The number of FLOPs required by EfficientNetB6 is less than the number of FLOPs required by EfficientNetB3.

- When using 1 × 1 convolution on a feature map, it is good practice to apply padding since 1 × 1 reduces the height and width of the feature map.

- In 3D convolution, the kernel moves in 3 directions and the input data is 4-dimensional.

Let the input have size Df×Df×M, where Df=128 and M=16, and let the output feature map (after the input passes through the conv layer) have size Df×Df×N, where N=32. Assume padded convolution. Let the width of the square kernel in the conv layer be k=5 (ignore the bias term in the calculation).

Calculate the number of parameters and computational cost for this convolution layer.

Answer :-

11. Number of Parameters:

Answer :-

12. Computational Cost:

Answer :-
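A minimal sketch of the calculation for questions 11 and 12, assuming "computational cost" means the number of multiplications (k·k·M·N·Df·Df, the convention used when comparing standard and depthwise separable convolutions). The function name `standard_conv_cost` is illustrative only.

```python
def standard_conv_cost(d_f, m, n, k):
    """Parameters and multiplications for a padded k x k standard convolution.

    d_f: spatial size of the (square) feature map
    m:   input channels
    n:   output channels
    k:   kernel width (bias ignored)
    """
    params = k * k * m * n          # one k x k x m filter per output channel
    mults = params * d_f * d_f      # every filter is applied at each output position
    return params, mults


params, cost = standard_conv_cost(d_f=128, m=16, n=32, k=5)
print(params)  # 12800
print(cost)    # 209715200
```

Under that assumption the layer has 5·5·16·32 = 12,800 parameters and needs 12,800·128·128 = 209,715,200 multiplications.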

Using the same dimensions specified in the previous question, calculate the number of parameters and computational cost, but use depthwise separable convolution instead of standard convolution.

13. Number of parameters for depthwise convolution:

Answer :-

14. Computational cost for depthwise convolution:

Answer :-

15. Number of parameters for pointwise convolution:

Answer :-

16. Computational cost for pointwise convolution:

Answer :-
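Questions 13 to 16 can be worked through with the sketch below. It assumes Df=128, M=16, N=32, k=5 from the problem statement, no bias, and computational cost counted as multiplications. The function names are illustrative only.

```python
def depthwise_cost(d_f, m, k):
    """Depthwise step: one k x k filter per input channel."""
    params = k * k * m
    mults = params * d_f * d_f
    return params, mults


def pointwise_cost(d_f, m, n):
    """Pointwise step: a 1 x 1 convolution mapping m channels to n channels."""
    params = m * n
    mults = params * d_f * d_f
    return params, mults


D_F, M, N, K = 128, 16, 32, 5

dw_params, dw_cost = depthwise_cost(D_F, M, K)
pw_params, pw_cost = pointwise_cost(D_F, M, N)

print(dw_params, dw_cost)  # 400 6553600
print(pw_params, pw_cost)  # 512 8388608

# Cost relative to the standard convolution (k*k*M*N*Df*Df multiplications).
standard_cost = K * K * M * N * D_F * D_F
ratio = (dw_cost + pw_cost) / standard_cost
print(f"{ratio:.5f}")      # 1/N + 1/k^2 = 0.07125
```

Under these assumptions the depthwise step has 400 parameters and 6,553,600 multiplications, and the pointwise step has 512 parameters and 8,388,608 multiplications, roughly 7% of the cost of the standard convolution.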