NPTEL Introduction To Machine Learning – IITKGP Week 5 Assignment Solutions

NPTEL Introduction To Machine Learning – IITKGP Week 5 Assignment Answer 2023

1. What would be the ideal complexity of the curve which can be used for separating the two classes shown in the image below?

a. Linear

b. Quadratic

c. Cubic

d. Insufficient data to draw a conclusion


2. Suppose you are using a linear SVM classifier on a two-class classification problem. You are given the following data, in which the points circled in red represent the support vectors.

If you remove any one of the red points from the data, will the decision boundary change?

a. Yes

b. No

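For a linear SVM on one-dimensional, linearly separable data, the maximum-margin threshold sits halfway between the closest pair of opposite-class points, and those two points are the support vectors. The minimal sketch below (pure Python; the data values and the helper name `max_margin_threshold` are hypothetical) uses that closed form to show that, in this example, removing a support vector moves the boundary while removing a non-support vector does not.

```python
def max_margin_threshold(negatives, positives):
    """Hard-margin SVM boundary for 1-D separable data (all negatives < all positives).

    The maximum-margin threshold is the midpoint between the largest negative
    point and the smallest positive point; those two points are the support vectors.
    """
    assert max(negatives) < min(positives), "data must be linearly separable"
    return (max(negatives) + min(positives)) / 2


negatives = [-3.0, -1.0]   # -1.0 is a support vector
positives = [2.0, 4.0]     # 2.0 is a support vector

original = max_margin_threshold(negatives, positives)
without_sv = max_margin_threshold(negatives, [4.0])       # drop support vector 2.0
without_non_sv = max_margin_threshold(negatives, [2.0])   # drop non-support vector 4.0

print(original, without_sv, without_non_sv)  # 0.5 1.5 0.5
```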

3. What do you mean by a hard margin in SVM Classification?

a. The SVM allows very low error in classification

b. The SVM allows a high amount of error in classification

c. Both are True

d. Both are False

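A hard-margin SVM requires every training point to satisfy `y_i (w · x_i + b) ≥ 1`, so no point may fall inside the margin or on the wrong side of the boundary. The sketch below (pure Python; the weight vector, bias, and data are hypothetical values chosen for illustration) checks that constraint and reports the margin width `2 / ||w||`.

```python
import math

def hard_margin_satisfied(w, b, X, y):
    """Return True if every point meets the hard-margin constraint y_i (w . x_i + b) >= 1."""
    return all(
        y_i * (sum(w_j * x_j for w_j, x_j in zip(w, x_i)) + b) >= 1
        for x_i, y_i in zip(X, y)
    )

def margin_width(w):
    """Distance between the margin hyperplanes w . x + b = +1 and w . x + b = -1."""
    return 2 / math.sqrt(sum(w_j ** 2 for w_j in w))

w, b = (1.0, 0.0), 0.0
X = [(1.0, 0.0), (3.0, 1.0), (-1.0, 0.0), (-2.0, -1.0)]
y = [1, 1, -1, -1]

print(hard_margin_satisfied(w, b, X, y))   # True
print(margin_width(w))                     # 2.0

# One extra positive point inside the margin (at x1 = 0.5) violates the hard-margin constraint.
print(hard_margin_satisfied(w, b, X + [(0.5, 0.0)], y + [1]))  # False
```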

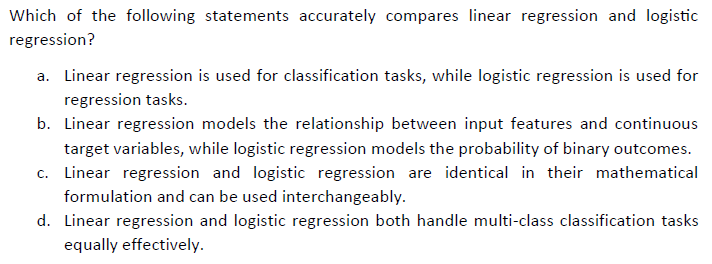

4.


5. True or false: after training an SVM, we can discard all examples that are not support vectors and still classify new examples.

a. True

b. False

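In the dual form, an SVM scores a point `x` as `f(x) = Σ_i α_i y_i K(x_i, x) + b`, and `α_i` is zero for every training point that is not a support vector. The sketch below (pure Python, linear kernel) uses a small hypothetical data set whose hard-margin solution is `w = (1, 0)`, `b = 0`, with `α = 0.5` on the two support vectors, and checks that dropping the non-support vectors leaves the scores of new points unchanged.

```python
def linear_kernel(u, v):
    return sum(u_j * v_j for u_j, v_j in zip(u, v))

def decision_function(x, X, y, alpha, b, kernel=linear_kernel):
    """Dual-form SVM score f(x) = sum_i alpha_i * y_i * K(x_i, x) + b."""
    return sum(a_i * y_i * kernel(x_i, x) for x_i, y_i, a_i in zip(X, y, alpha)) + b

# Training set and dual coefficients of its hard-margin solution (w = (1, 0), b = 0).
X = [(1.0, 0.0), (-1.0, 0.0), (3.0, 0.0), (-3.0, 0.0)]
y = [1, -1, 1, -1]
alpha = [0.5, 0.5, 0.0, 0.0]   # only the first two points are support vectors
b = 0.0

# Keep only the support vectors (alpha_i > 0).
sv = [i for i, a in enumerate(alpha) if a > 0]
X_sv = [X[i] for i in sv]
y_sv = [y[i] for i in sv]
alpha_sv = [alpha[i] for i in sv]

new_points = [(2.0, 5.0), (-0.5, 1.0), (0.25, -4.0)]
full = [decision_function(x, X, y, alpha, b) for x in new_points]
pruned = [decision_function(x, X_sv, y_sv, alpha_sv, b) for x in new_points]
print(full == pruned)  # True
```

Since the non-support vectors contribute `0 · y_i · K(x_i, x)` to every score, storing only the support vectors and their coefficients is enough for prediction.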

6. Suppose you are building an SVM model on data X. The data X can be error-prone, which means that you should not trust any specific data point too much. Now suppose you want to build an SVM model with a quadratic kernel function (a polynomial of degree 2) that uses the slack penalty parameter C as one of its hyperparameters.

What would happen if you use a very large value of C (C → ∞)?

a. We can still classify the data correctly for the given setting of hyperparameter C.

b. We cannot classify the data correctly for the given setting of hyperparameter C.

c. None of the above


7. Following Question 6, what would happen if you use a very small value of C (C ≈ 0)?

a. Data will be correctly classified

b. Misclassification would happen

c. None of these

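The trade-off behind Questions 6 and 7 is the soft-margin objective `½ ||w||² + C Σ_i max(0, 1 − y_i (w · x_i + b))`, where each hinge term is the slack of point `i`. The sketch below (pure Python) does not train an SVM, and a linear model stands in for the quadratic kernel for simplicity; it only compares the objective for two hand-picked, hypothetical boundaries on 1-D toy data with one noisy negative point. Boundary A keeps a wide margin and accepts slack on the noisy point; boundary B bends to fit it with a narrower margin.

```python
def soft_margin_objective(w, b, X, y, C):
    """0.5 * ||w||^2 + C * sum of hinge slacks max(0, 1 - y_i (w . x_i + b))."""
    reg = 0.5 * sum(w_j ** 2 for w_j in w)
    slack = sum(
        max(0.0, 1 - y_i * (sum(w_j * x_j for w_j, x_j in zip(w, x_i)) + b))
        for x_i, y_i in zip(X, y)
    )
    return reg + C * slack

# 1-D toy data: the negative point at x = 1.0 is "noisy" (it lies much closer to the positives).
X = [(2.0,), (3.0,), (-2.0,), (-3.0,), (1.0,)]
y = [1, 1, -1, -1, -1]

boundary_a = ((1.0,), 0.0)   # threshold at 0: margin width 2, slack 2 on the noisy point
boundary_b = ((2.0,), -3.0)  # threshold at 1.5: margin width 1, no slack

for C in (0.01, 1000.0):
    obj_a = soft_margin_objective(*boundary_a, X, y, C)
    obj_b = soft_margin_objective(*boundary_b, X, y, C)
    print(C, obj_a, obj_b, "A" if obj_a < obj_b else "B")
```

With C = 0.01 the wide-margin boundary A has the lower objective, while with C = 1000 boundary B wins because any slack is penalized heavily, so a very large C pushes the model to fit every (possibly noisy) point.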

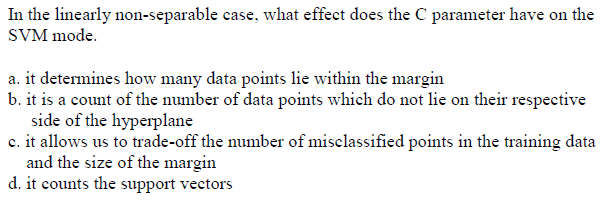

8.


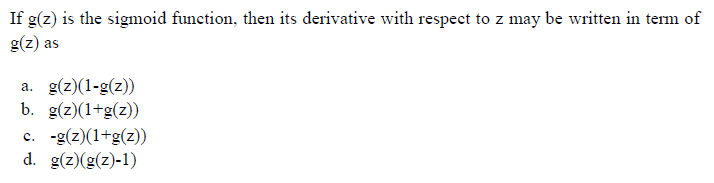

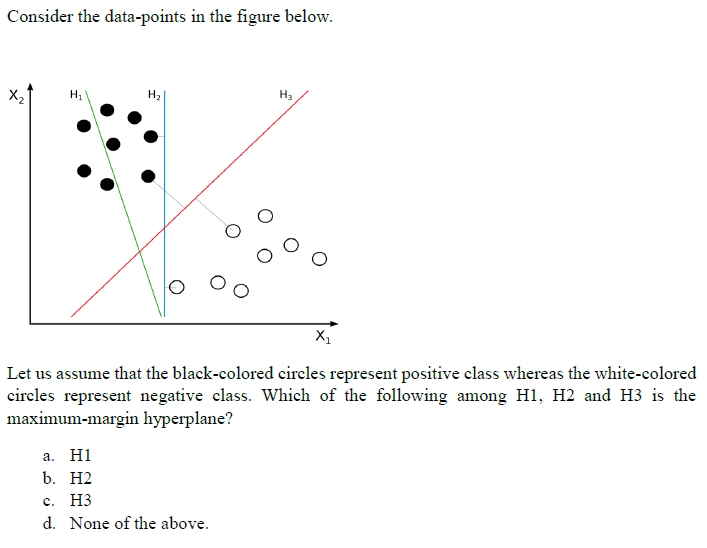

9.


10. What type of kernel function is commonly used for non-linear classification tasks in SVM?

a. Linear kernel

b. Polynomial kernel

c. Sigmoid kernel

d. Radial Basis Function (RBF) kernel

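The RBF (Gaussian) kernel `K(x, z) = exp(−γ ||x − z||²)` scores two points by how close they are, which lets an SVM draw curved decision boundaries. The sketch below (pure Python) evaluates it directly; the value `gamma = 0.5` is an arbitrary assumption, not a recommended setting.

```python
import math

def rbf_kernel(x, z, gamma=0.5):
    """Gaussian RBF kernel K(x, z) = exp(-gamma * ||x - z||^2)."""
    sq_dist = sum((x_j - z_j) ** 2 for x_j, z_j in zip(x, z))
    return math.exp(-gamma * sq_dist)

x = (0.0, 0.0)
print(rbf_kernel(x, x))            # 1.0 (a point is maximally similar to itself)
print(rbf_kernel(x, (1.0, 0.0)))   # exp(-0.5), about 0.607
print(rbf_kernel(x, (3.0, 0.0)))   # exp(-4.5), about 0.011
```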

11. Which of the following statements is/are true about kernels in SVM?

1. Kernel functions map low-dimensional data to a high-dimensional space.

2. A kernel is a similarity function.

a. 1 is True but 2 is False

b. 1 is False but 2 is True

c. Both are True

d. Both are False

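Both statements can be seen in a small case: the degree-2 polynomial kernel `K(x, z) = (x · z)²` on 2-D inputs equals an ordinary dot product after the explicit map `φ(x) = (x1², √2·x1·x2, x2²)` into 3-D space. The sketch below (pure Python; the input points are arbitrary) computes the value both ways.

```python
import math

def poly2_kernel(x, z):
    """Homogeneous degree-2 polynomial kernel K(x, z) = (x . z)^2 for 2-D inputs."""
    return (x[0] * z[0] + x[1] * z[1]) ** 2

def phi(x):
    """Explicit 3-D feature map whose inner product reproduces poly2_kernel."""
    return (x[0] ** 2, math.sqrt(2) * x[0] * x[1], x[1] ** 2)

x, z = (1.0, 2.0), (3.0, 4.0)
implicit = poly2_kernel(x, z)                          # computed in the original 2-D space
explicit = sum(p * q for p, q in zip(phi(x), phi(z)))  # computed in the mapped 3-D space
print(implicit, explicit)
```

Both values equal 121 (up to floating-point rounding), so the kernel gives the high-dimensional similarity without ever building `φ(x)` explicitly.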

12. The soft-margin SVM is preferred over the hard-margin SVM when:

a. The data is linearly separable

b. The data is noisy

c. The data contains overlapping points

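A soft-margin SVM relaxes each constraint to `y_i (w · x_i + b) ≥ 1 − ξ_i` with `ξ_i ≥ 0`. The sketch below (pure Python; the boundary and data are hypothetical) computes the slack `ξ_i = max(0, 1 − y_i (w · x_i + b))` each point needs. Here `0 < ξ_i < 1` means the point is inside the margin but correctly classified, and `ξ_i > 1` means it is misclassified.

```python
def slacks(w, b, X, y):
    """Slack xi_i = max(0, 1 - y_i (w . x_i + b)) needed by each point under boundary (w, b)."""
    return [
        max(0.0, 1 - y_i * (sum(w_j * x_j for w_j, x_j in zip(w, x_i)) + b))
        for x_i, y_i in zip(X, y)
    ]

w, b = (1.0, 0.0), 0.0
X = [(2.0, 1.0), (1.0, -1.0), (-2.0, 0.0), (-1.5, 2.0), (0.5, 0.0), (2.0, 1.0)]
y = [1, 1, -1, -1, 1, -1]   # the last point duplicates the first one with the opposite label

for x_i, xi in zip(X, slacks(w, b, X, y)):
    print(x_i, xi)
```

Because the last point coincides with the first one but has the opposite label, their margins are `m` and `−m` for any `(w, b)`, so their slacks always sum to at least 2. No hard-margin solution exists for such overlapping data, while a soft-margin SVM can still be trained.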

13.


14. What is the primary advantage of Kernel SVM compared to traditional SVM with a linear kernel?

a. Kernel SVM requires less computational resources.

b. Kernel SVM does not require tuning of hyperparameters.

c. Kernel SVM can capture complex non-linear relationships between data points.

d. Kernel SVM is more robust to noisy data.

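The XOR pattern is a standard case where no straight line separates the classes but a polynomial kernel does. To keep the example short and dependency-free, the sketch below swaps in a kernel perceptron (not an SVM) that uses the same kernel trick; the kernels, data, and epoch count are illustrative assumptions.

```python
def linear_kernel(u, v):
    return u[0] * v[0] + u[1] * v[1]

def poly2_kernel(u, v):
    """Inhomogeneous degree-2 polynomial kernel (1 + u . v)^2."""
    return (1 + linear_kernel(u, v)) ** 2

def train_kernel_perceptron(X, y, kernel, epochs=10):
    """Dual perceptron: alpha[i] counts the mistakes made on training point i."""
    alpha = [0] * len(X)
    for _ in range(epochs):
        for j, (x_j, y_j) in enumerate(zip(X, y)):
            score = sum(a * y_i * kernel(x_i, x_j) for a, x_i, y_i in zip(alpha, X, y))
            if y_j * score <= 0:   # mistake (or zero score): add this point to the model
                alpha[j] += 1
    return alpha

def predict(x, X, y, alpha, kernel):
    score = sum(a * y_i * kernel(x_i, x) for a, x_i, y_i in zip(alpha, X, y))
    return 1 if score > 0 else -1

# XOR: no straight line separates the two classes.
X = [(1, 1), (-1, -1), (1, -1), (-1, 1)]
y = [1, 1, -1, -1]

for name, kernel in (("linear", linear_kernel), ("poly2", poly2_kernel)):
    alpha = train_kernel_perceptron(X, y, kernel)
    preds = [predict(x, X, y, alpha, kernel) for x in X]
    print(name, preds == y)
```

The linear kernel cannot fit all four points, while the degree-2 polynomial kernel classifies every training point correctly.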

15. What is the sigmoid function’s role in logistic regression?

a. The sigmoid function transforms the input features to a higher-dimensional space.

b. The sigmoid function calculates the dot product of input features and weights.

c. The sigmoid function defines the learning rate for gradient descent.

d. The sigmoid function maps the linear combination of features to a probability value.

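In logistic regression the model first forms the linear combination `z = w · x + b` and then applies the sigmoid `σ(z) = 1 / (1 + e^(−z))` to turn it into a probability between 0 and 1. The sketch below (pure Python) shows that pipeline; the weights and bias are hypothetical, not learned from any data.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict_proba(x, w, b):
    """P(y = 1 | x) = sigmoid(w . x + b) for logistic regression."""
    z = sum(w_j * x_j for w_j, x_j in zip(w, x)) + b   # linear combination of the features
    return sigmoid(z)

w, b = (0.8, -0.4), 0.1   # hypothetical weights and bias
for x in [(0.0, 0.0), (3.0, 1.0), (-2.0, 4.0)]:
    print(x, round(predict_proba(x, w, b), 3))
```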

| Course Name | Introduction To Machine Learning – IITKGP |
| --- | --- |
| Category | NPTEL Assignment Answer |
